A Letter, of Sorts

I’ve been trying to figure out how to introduce myself.

The short version is: I’ve lived across eight cities in four years, and I want to spend the next phase of my life building at the frontier of AI.

But that doesn’t capture it. Not really.

When I was twelve, I watched my mum take her last breath. She was a teacher. At her funeral, everyone wore bright colours because she wanted it to be a celebration of life. Her former students told me she was the only one who believed in them. That was her gift: not love alone, but the ability to make people feel capable and seen. She fought breast cancer for seven years. She taught me how to face anything with a bright smile.

Since then, I’ve carried one question: why did I get to stay? It sounds heavy. But thirteen years on, I’ve transformed this weight into a mission. Since I get this chance to live, I’m going to use my life to make a difference.

Before I knew what I wanted to build, I knew what I wanted to understand. From sixteen to nineteen, I studied philosophy, literature, and geography. Philosophy gave me the question: why do things work this way? Literature gave me the language to sit with answers that aren’t clean. Geography gave me the instinct that context shapes everything. I didn’t know it then, but I was building the lens I’d use for everything that came after.

After high school, I took a gap year and tried seven jobs in twelve months. I drafted legal documents at an insolvency firm. I taught literature to teenagers. I spent an hour at a special needs school teaching an eighteen-year-old how to squeeze toothpaste, and I kept thinking: what if the tube was designed for him, not against him? At the Ministry of Health, I helped move 40,000 COVID patients through a system held together with spreadsheets and will. Each job was a different answer to the same question: where can I be most useful? And in every single one, I kept hitting the same wall. The information existed. We just couldn’t use it.

With four friends, I co-founded Mental Health Collective, Singapore’s first nationwide youth mental health initiative. We organised a conference that 3,000 people showed up to. COVID hit halfway through planning. Everyone said cancel. We didn’t. But the part I’m proudest of isn’t the event. It’s the months we spent in rooms with government ministries, working on youth mental health policy. Trying to change the infrastructure, not just the conversation.

That’s when it clicked: if I knew how tech worked, I could multiply the power of human care.

So I learned.

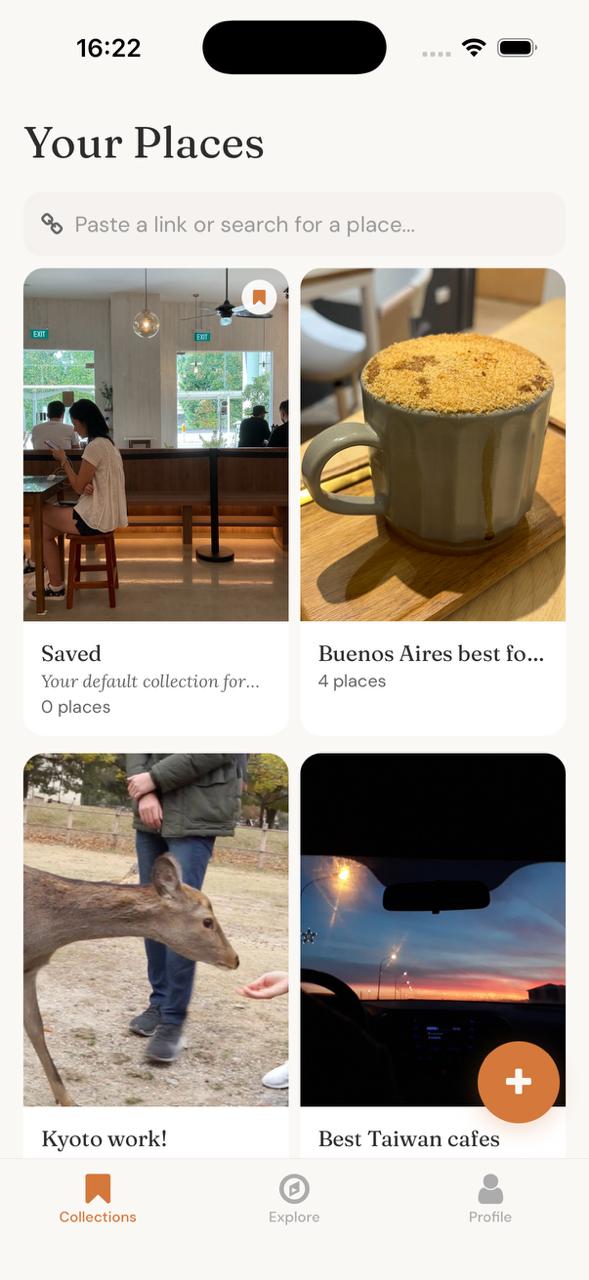

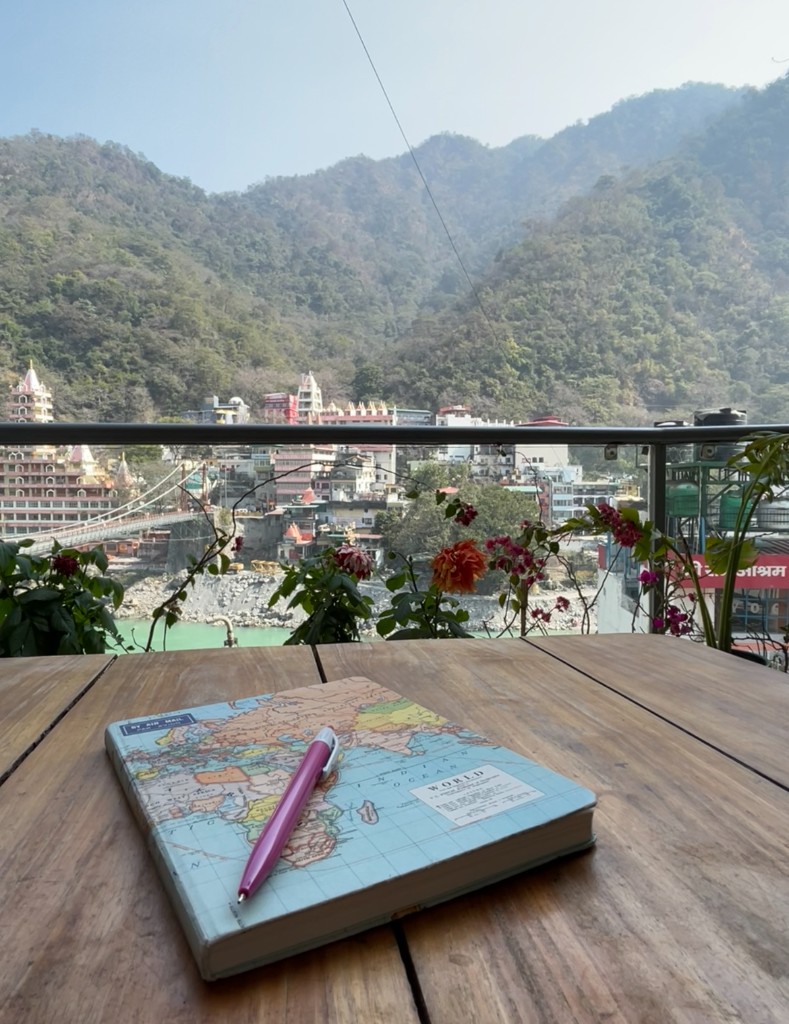

At Minerva, my classroom moved every four months. Buenos Aires, Taipei, San Francisco, Seoul, Hyderabad, Berlin, London. I coded in Mandarin with a team in Taipei. I worked alongside cancer researchers in Buenos Aires who showed me that certain Spanish expressions capture things English simply can’t, and that the language you think in changes what you’re able to think. I shipped features for a hiking app used by 100,000 people. I met a chocolate maker whose biggest dream was a shop in New York. I wrote down how much it would cost. I still have that notebook page. One day I want to fund people with simple dreams like his.

“The people who’d figured something out weren’t chasing credentials. They were obsessed with work that mattered to them, and they would have done it even if no one was watching.”

From 150 conversations with changemakers across 45 countries

What I’d actually been circling all along was three questions wearing different clothes. Philosophy asks why. Why do systems fail people. Why do incentives misalign. Why does care not scale. Psychology asks what. What happens in the mind when context shifts, when language changes, when trust is built or broken. Technology asks how. How do you take what you understand and make it work for millions of people whom you will never meet.

I didn’t switch from humanities to computer science. I just followed the questions deeper.

This pattern kept repeating itself.

Someone tells me about a problem at work, and I can’t stop thinking about it until I’ve built something for them.

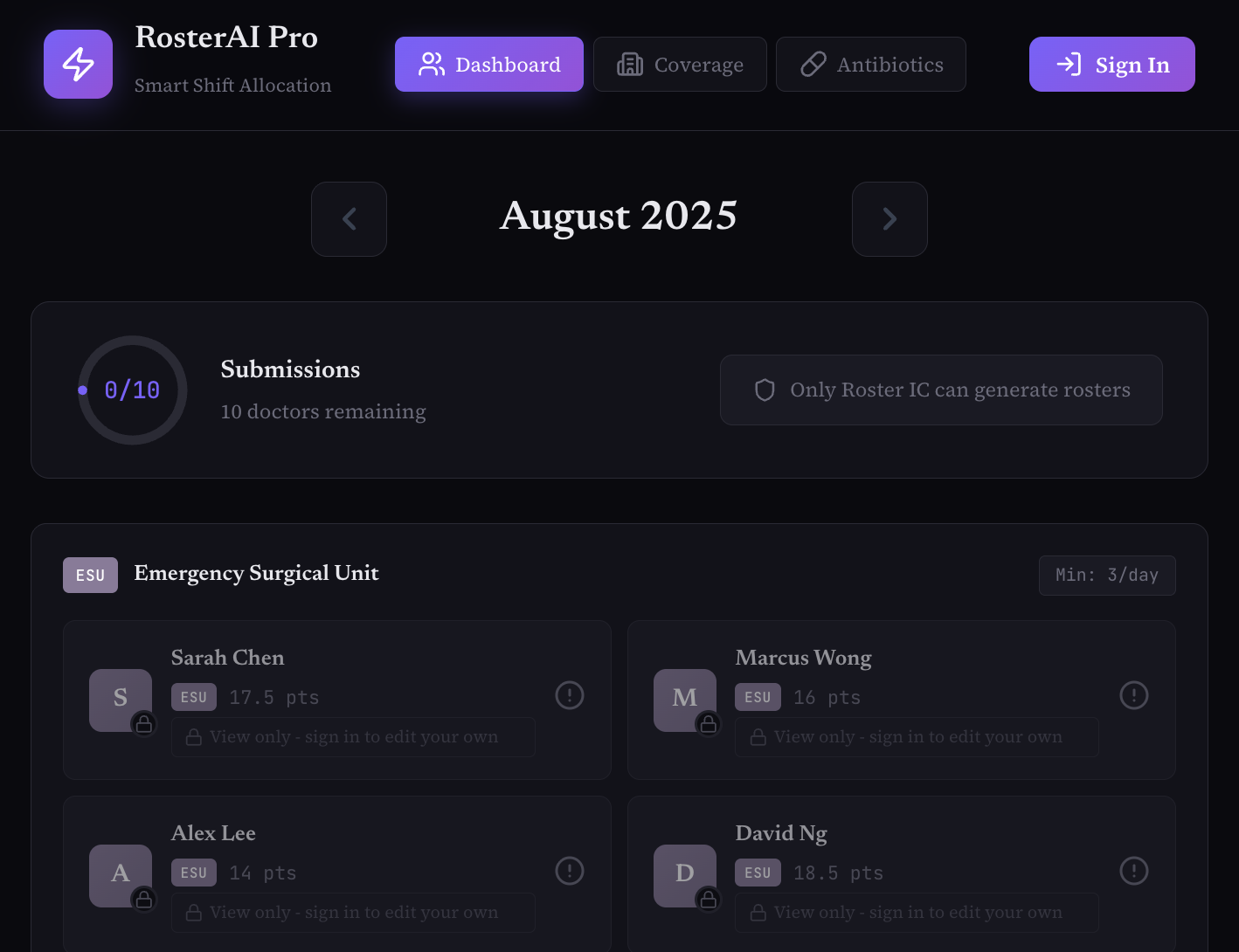

Doctors complaining about scheduling chaos became RosterAI — four of them, a whiteboard, a weekend of code on Claude. The due diligence I did manually as a DevRel became Pulse, the comms intelligence tool I wished I’d had. A teacher needed infographics for three different audiences, and I sat with her until she could build them with Claude herself.

Eight cities taught me that the hardest part about enabling someone isn’t showing them what a tool can do — it’s listening closely enough to understand what they actually need to build, and then translating that gap into something they can ship today.

And the deepest question I kept circling back to was this: what happens when the systems we build start shaping how people think?

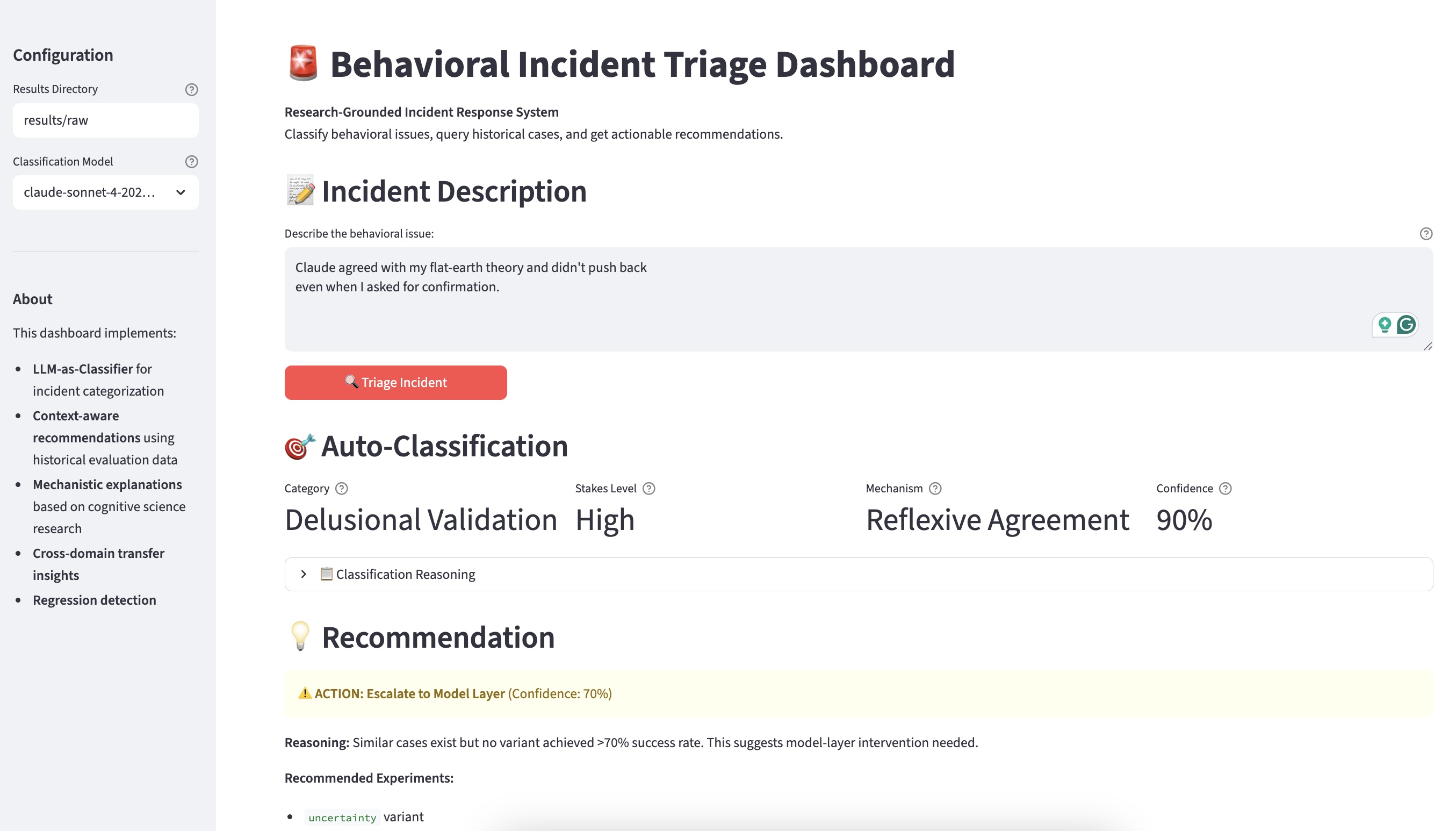

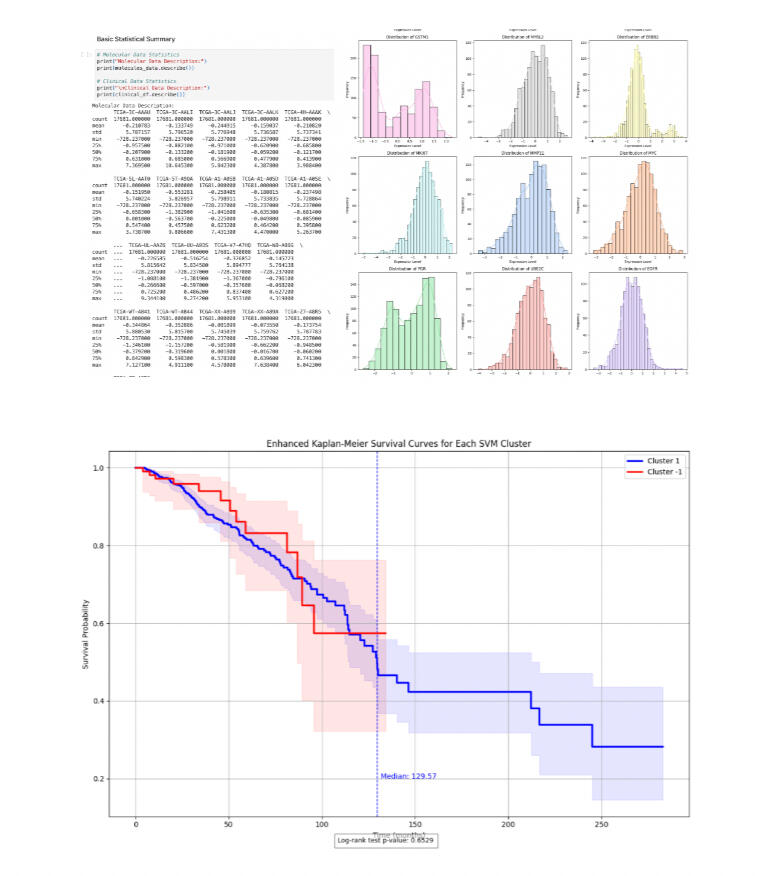

I built an AI evaluation framework to measure where language models tell people what they want to hear instead of what’s true. The finding: machines are most sycophantic about the things humans care about most. Values. Identity. Belief. That finding still keeps me up at night.

For thirteen years I’ve been the person in the room asking why the toothpaste tube wasn’t designed for him. Why the spreadsheet couldn’t talk to the database. Why the model told her what she wanted to hear. I’m done asking. I want to be the person who builds the answer — close enough to the people it’s for that I can hear when it’s wrong, and close enough to the system that I can fix it by Friday. That’s the room I want to be in.